When data is scheduled to be exported, the SEO Spider runs in headless mode (that is, without an interface). This avoids any user involvement, such as the application starting in front of you and prompting you to select options, which would be weird. Scheduling is set up through the user interface; if you'd rather operate the SEO Spider through the command line, read Screaming Frog's command-line interface guide. It's also worth taking your system's resources and crawl timing into account when you schedule crawls.

This tab is used if you want to crawl only certain types of things, like Images, CSS, certain types of Links, AMP, Pages, and Extractions. In the case of Extractions, for instance, if you don't want to extract page titles or meta descriptions, you can change that here. You can also set how many pages you want to crawl on a website, and how deep you want to go with crawl depth, which basically just means how many links you're going to follow into the website. Speaking of limits, Screaming Frog has a 500 URL limit for the free version. I haven't had the chance to get fully immersed in this feature yet, but as far as I know, these are the different things that you can force.
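
To make the crawl depth and URL limit ideas concrete, here is a minimal Python sketch of a breadth-first walk over a tiny, made-up link graph. It is not how Screaming Frog is implemented; the `crawl` function, its `max_depth` and `max_urls` parameters, and the `site` dictionary are all hypothetical names used only for illustration.

```python
from collections import deque

def crawl(links, start, max_depth=None, max_urls=None):
    """Breadth-first walk over a link graph with optional depth and URL caps.

    links: dict mapping a URL to the list of URLs it links to (made-up data).
    Returns a list of (url, depth) pairs in the order they were visited.
    """
    seen = {start}
    visited = []
    queue = deque([(start, 0)])
    while queue:
        url, depth = queue.popleft()
        # Stop once the URL cap is reached (similar in spirit to a 500 URL limit).
        if max_urls is not None and len(visited) >= max_urls:
            break
        visited.append((url, depth))
        # Do not follow links from pages that already sit at the depth cap.
        if max_depth is not None and depth >= max_depth:
            continue
        for target in links.get(url, []):
            if target not in seen:
                seen.add(target)
                queue.append((target, depth + 1))
    return visited

# Hypothetical site: the home page links to two pages, and so on.
site = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-1", "/blog/post-2"],
    "/blog/post-1": ["/blog/post-1/comments"],
}

shallow = crawl(site, "/", max_depth=1)  # home page plus pages one link away
capped = crawl(site, "/", max_urls=2)    # stops after two URLs
```

With a depth cap of 1, only the home page and the pages it links to directly are visited; with a URL cap of 2, the walk stops after two pages regardless of depth.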

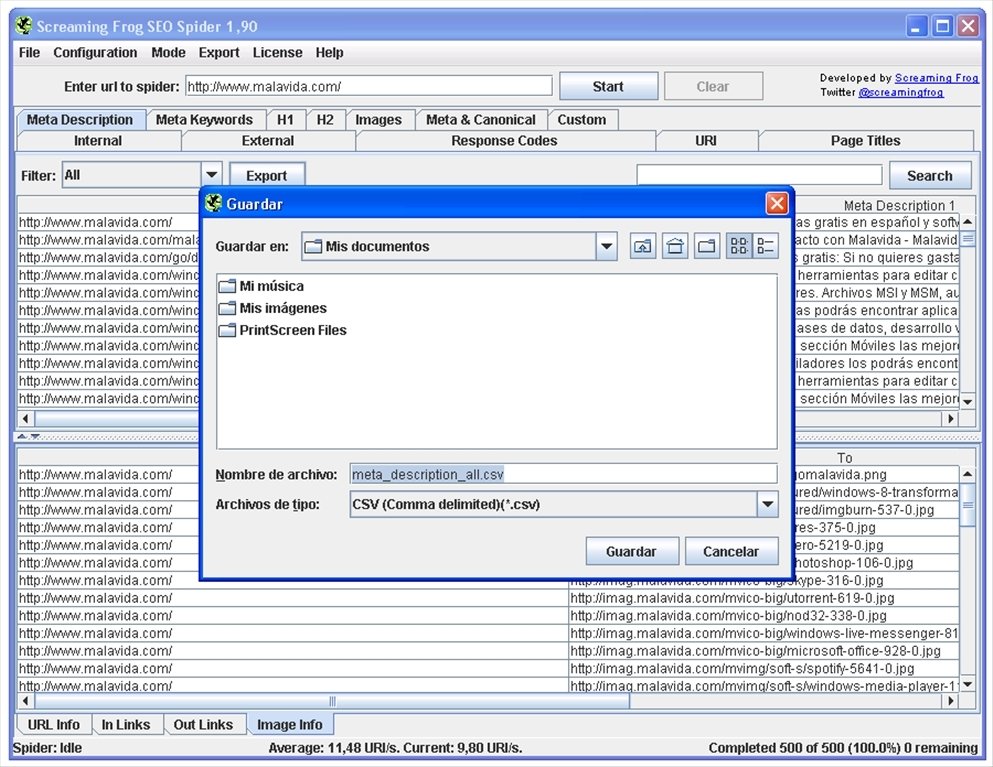

What you'll see when you begin is all of the URLs showing up in your crawl; these are all the different things on the website. There are a bunch of different filters that you can use. Generally, you're going to filter by HTML, since you just want to see the pages on the website and don't really care too much about the images or the PDFs. You can filter by those if you're checking whether the site has certain things like PDFs, but for the most part you'll filter by HTML so you can see all of the HTML pages on the website.

Before I go into what you're going to want to look at in terms of all the different errors, let me just go over the first row. This tab is used when you want to open a recent crawl that you've done, so if you've saved a crawl or want to load one, you can do that here. There's also the “Save” tab, where you can manually save a crawl, or press “Control” + “S” for the shortcut.

When using scheduling, there are a few things to keep in mind. There's no need to save crawls in scheduling if you're using database storage mode, because they're saved automatically in the SEO Spider's database. Crawls can be accessed via the application's 'File > Crawls' menu after the scheduled crawl has completed. See Screaming Frog's tutorial on how to save, open, export, and import crawls. A new instance of the SEO Spider is started for each scheduled crawl. As a result, if multiple crawls overlap, multiple instances of the SEO Spider will run at the same time, rather than waiting for the preceding crawl to finish.
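
Since overlapping scheduled crawls each start their own instance, it can help to check a planned schedule before committing to it. The sketch below is a rough Python illustration, not part of Screaming Frog: `max_concurrent` is a hypothetical helper, and the start times and durations are made-up estimates you would supply yourself.

```python
from datetime import datetime, timedelta

def max_concurrent(crawls):
    """Return the peak number of crawls that would run at the same moment.

    crawls: list of (start, estimated_duration) pairs, where start is a
    datetime and estimated_duration is a timedelta (both are your own guesses).
    """
    events = []
    for start, duration in crawls:
        events.append((start, 1))              # a crawl starts
        events.append((start + duration, -1))  # a crawl is expected to end
    # Sort by time; at equal times, ends (-1) are processed before starts (+1).
    events.sort(key=lambda event: (event[0], event[1]))
    running = peak = 0
    for _, delta in events:
        running += delta
        peak = max(peak, running)
    return peak

# Hypothetical schedule: three crawls on the same night.
schedule = [
    (datetime(2024, 1, 1, 1, 0), timedelta(hours=2)),  # 01:00 to 03:00
    (datetime(2024, 1, 1, 2, 0), timedelta(hours=2)),  # 02:00 to 04:00
    (datetime(2024, 1, 1, 3, 0), timedelta(hours=1)),  # 03:00 to 04:00
]
peak_instances = max_concurrent(schedule)
```

If the peak is higher than your machine can comfortably handle, spacing out the start times is the simplest fix.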

To get started, download and install the SEO Spider, which is free and allows you to crawl up to 500 URLs at once. It runs on Windows, Mac OS X, and Ubuntu. Click the download button to get the installer, then double-click the SEO Spider installation file you have downloaded and follow the installer's instructions.

You can purchase a license to remove the 500 URL crawl limit, expand the setup options, and gain access to more advanced capabilities. One thing to note about the free version is that you can't use the Google Analytics and Search Console integrations. Without them, you're going to have a harder time pulling analytics data into the crawl. In this guide, however, I'll be using the free version, since a lot of things can be done without having Google Analytics or Google Search Console integrated.

Before starting a crawl, it's a good idea to consider what kind of information you're looking for, how big the site is, and how much of the site you'll need to crawl to get it all. When crawling larger sites, it's sometimes best to limit the crawler to a subset of URLs in order to gather a representative sample of data. This makes file sizes and data exports a little easier to control.

To crawl your entire site, including all subdomains, you'll need to make a few minor changes to the spider setup. By default, Screaming Frog only crawls the subdomain you enter. Any further subdomains the spider encounters are treated as external links. To crawl more subdomains, you must update the settings in the Spider Configuration menu. If you select 'Explore All Subdomains,' the spider will crawl any links it discovers to other subdomains on your site.

To put things into perspective, let's use my website and press start.
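
The subdomain behavior described above (only the entered subdomain counts as internal unless all subdomains are enabled) can be sketched in a few lines of Python. This is only an illustration of the idea, not Screaming Frog's actual logic; `is_internal`, `all_subdomains`, and `root_domain` are hypothetical names, and the root domain is passed in by hand rather than detected.

```python
from urllib.parse import urlsplit

def is_internal(url, start_url, all_subdomains=False, root_domain=None):
    """Decide whether a discovered URL would count as internal.

    By default only the exact host of start_url is internal. When
    all_subdomains is True, any host under root_domain is internal too.
    """
    host = (urlsplit(url).hostname or "").lower()
    start_host = (urlsplit(start_url).hostname or "").lower()
    if host == start_host:
        return True
    if all_subdomains and root_domain:
        root = root_domain.lower()
        # Match the root itself or anything ending in ".root".
        return host == root or host.endswith("." + root)
    return False

start = "https://www.example.com/"

# Default behavior: another subdomain is treated as external.
default_result = is_internal("https://blog.example.com/post", start)

# With all subdomains enabled, the same link is treated as internal.
subdomain_result = is_internal(
    "https://blog.example.com/post",
    start,
    all_subdomains=True,
    root_domain="example.com",
)
```

The leading dot in the suffix check matters: without it, an unrelated host such as `notexample.com` would wrongly match `example.com`.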
